Software Engineer · AI Systems

Murad Al-Balushi

I build the control, evaluation, and safety layers that make LLM systems reliable enough for production.

Professional Experience

360Remit

Software Developer

Muscat, Oman

Jan 2025 – Mar 2026

- Owned end-to-end VAPT for a regulated fintech platform, acting as the risk authority between vendors and engineering: validated findings, cut false positives by 40%+, closed 100% of critical issues pre-launch, and had zero security incidents at go-live.

- Engineered vendor synchronization pipeline for 500k+ records (delta detection, conflict resolution, bidirectional sync), cutting manual processing from 3–5 days to under 5 minutes with 100% DB integrity.

- Delivered MTO, eKYC, and AML integrations and designed phased infra (DR, capacity, data residency), enabling platform launch within regulatory deadlines while unblocking user-facing onboarding flows.

- Built SQL/Python humanization pipeline converting 500k+ vendor records to presentation-ready data, eliminating manual cleaning and cutting prep time by 95%+.

Highlight Projects

A selection of projects I'm particularly proud of

AI Code Generation Evaluation Engine (Code Arbiter)

Execution-based benchmarking — run the code, classify the failure

Replaced subjective LLM code review with deterministic execution-based validation. Runs generated code in isolated Docker sandboxes, classifies failures across syntax, runtime, logic, and temporal reasoning, and benchmarks multiple models under identical conditions.
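A minimal sketch of the execution-based classification idea, not Code Arbiter's actual code. It assumes a plain subprocess as a stand-in for the isolated Docker sandbox and compares stdout against an expected string. The `classify` function and its labels are hypothetical, and the temporal-reasoning category is not modeled here.

```python
import subprocess
import sys


def classify(source: str, expected_stdout: str, timeout_s: float = 5.0) -> str:
    """Return 'pass', 'syntax', 'runtime', 'timeout', or 'logic' for one candidate."""
    # Syntax check: compile() parses the source without executing it.
    try:
        compile(source, "<candidate>", "exec")
    except SyntaxError:
        return "syntax"

    # Assumption: a subprocess stands in for the Docker sandbox described above.
    try:
        proc = subprocess.run(
            [sys.executable, "-c", source],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return "timeout"

    # A non-zero exit code means the program raised or exited abnormally.
    if proc.returncode != 0:
        return "runtime"

    # The program ran cleanly but produced the wrong answer.
    if proc.stdout.strip() != expected_stdout.strip():
        return "logic"
    return "pass"
```

Running every model's output through the same function, with the same inputs and timeout, is what keeps the comparison deterministic.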

CostPlan – LLM Cost Enforcement Proxy

Open-source circuit breaker for autonomous agent API spend

Built an open-source transparent proxy that enforces per-call and per-session budget limits on LLM API calls, with cache-aware pricing and zero-latency SSE streaming — preventing unbounded spend in autonomous agent workflows.
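A minimal sketch of the budget check, not CostPlan's actual code. The names `SessionBudget`, `BudgetExceeded`, and `estimate_cost_usd` are hypothetical, and prices are assumed to be quoted in USD per million tokens, with cached input tokens billed at their own rate.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a call would break the per-call or per-session limit."""


def estimate_cost_usd(input_tokens: int, cached_tokens: int, output_tokens: int,
                      price_in: float, price_cached: float, price_out: float) -> float:
    # Cache-aware pricing: cached input tokens use the cached rate,
    # the remaining input tokens use the normal input rate.
    uncached = input_tokens - cached_tokens
    total = uncached * price_in + cached_tokens * price_cached + output_tokens * price_out
    return total / 1_000_000


@dataclass
class SessionBudget:
    per_call_limit_usd: float
    per_session_limit_usd: float
    spent_usd: float = 0.0

    def check(self, estimated_cost_usd: float) -> None:
        # Trip the breaker before the request is forwarded upstream.
        if estimated_cost_usd > self.per_call_limit_usd:
            raise BudgetExceeded("per-call limit exceeded")
        if self.spent_usd + estimated_cost_usd > self.per_session_limit_usd:
            raise BudgetExceeded("per-session limit exceeded")

    def record(self, actual_cost_usd: float) -> None:
        # Accumulate the cost reported after the call completes.
        self.spent_usd += actual_cost_usd
```

A proxy built this way would call `check` before forwarding a request and `record` once usage is known, so an agent loop is cut off as soon as the next call would exceed the session budget.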

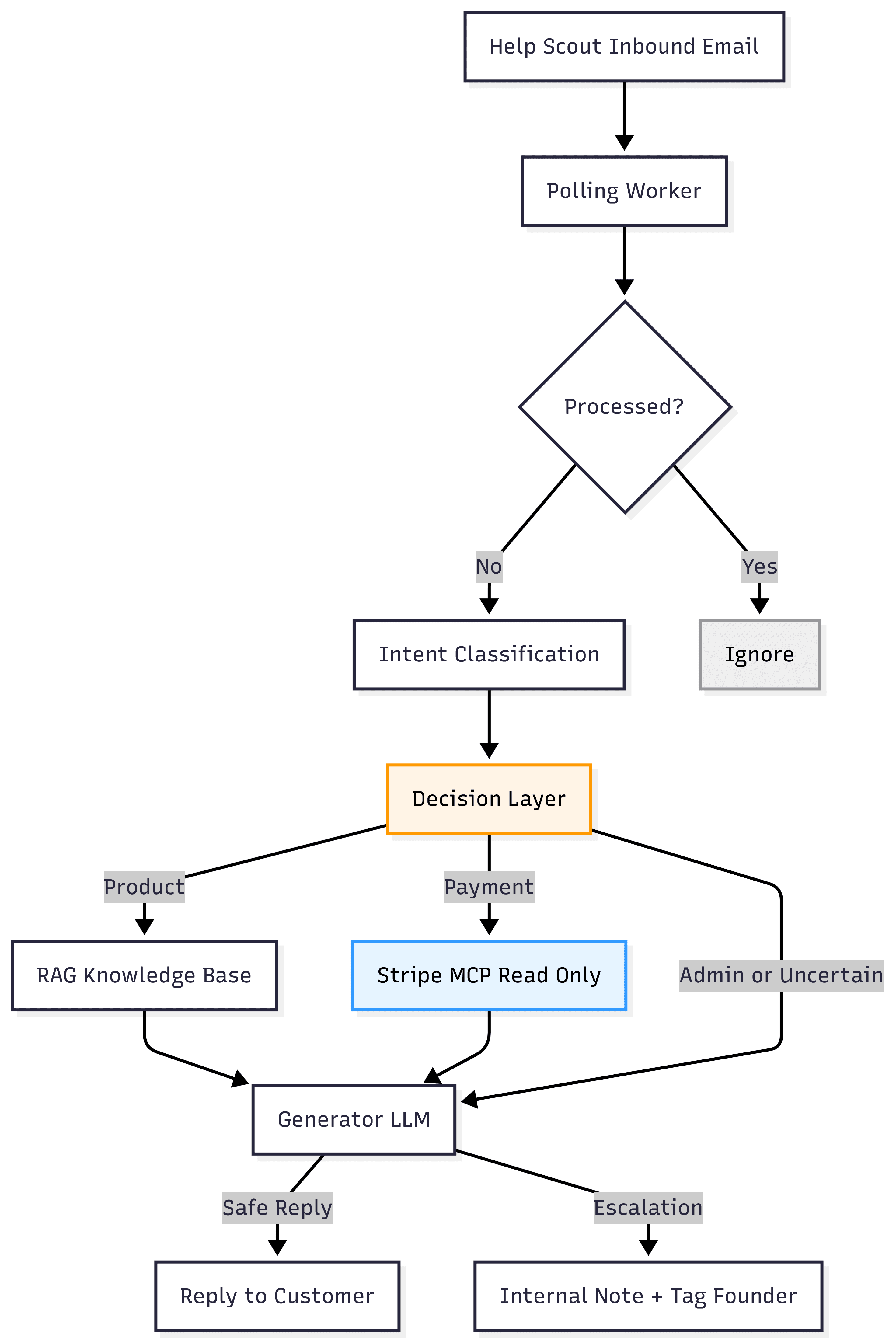

Production AI Support Agent (Guardrail-First)

Risk-aware LLM-powered support agent reducing customer support load

Deployed a guardrail-first AI support agent handling live customer tickets with Stripe-backed context and deterministic escalation logic, designed to fail safely under uncertainty in a production SaaS environment.

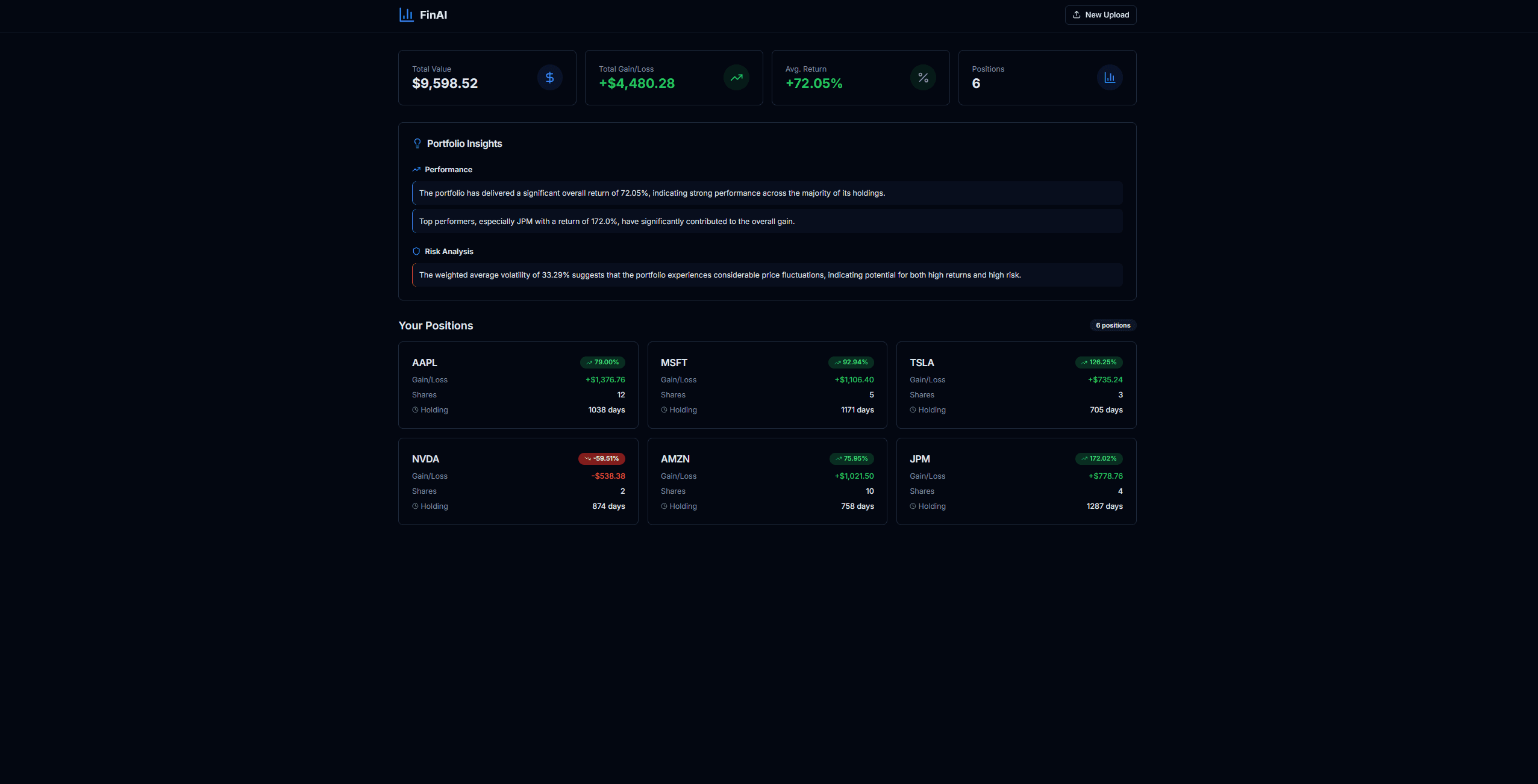

FinAI – AI Portfolio Analysis & Decision Support System

Compute-first financial analysis engine with constrained LLM interpretation

Combines deterministic portfolio metrics with constrained LLM interpretation to deliver grounded, non-speculative decision support.

Technical Skills

Technologies I use to build, ship, and evaluate production systems

Languages

AI & LLM

Backend

Infrastructure

Databases

Tools & Integrations

Let's Connect

Open to opportunities, collaborations, and interesting problems.